Introduction

In February 2026, FDA Commissioner Makary and CBER Director Prasad published a policy statement in the New England Journal of Medicine declaring that FDA's default position going forward is one adequate and well-controlled study, combined with confirmatory evidence, as the basis for marketing authorization. FDA has held statutory authority under the FD&C Act to approve drugs on a single adequate and well-controlled investigation since 1997, and has exercised that authority in oncology and rare disease for years. What the February 2026 statement does is extend that standard broadly — across therapeutic areas and indication types — as the operational default rather than the exception.

The scientific and regulatory debate around that shift will continue. The operational consequence is immediate and precise: when there is no second study, every execution failure in the first one is permanent.

That consequence is not primarily a monitoring problem or a quality management problem. It is an engineering problem — specifically, the problem of how a clinical protocol, written as a document, gets translated into a software system that executes it deterministically across sites, vendors, and data streams. The gap between those two things — the protocol as written and the protocol as executed — is where single-study programs are lost.

What the Single-Trial Default Changes

The two-study standard that has governed drug approval for most of the past 50 years created a structural redundancy in clinical evidence. A protocol deviation or data inconsistency in Study 1 could, in principle, be addressed in Study 2. Site-level variation in eligibility interpretation could be corrected in the second study. Statistical concerns raised by a marginal primary endpoint could be answered by replication.

That redundancy shaped how sponsors thought about operational risk. Data cleaning cycles, post-hoc eligibility reviews, and retrospective deviation assessments were expensive and undesirable, but they were not necessarily terminal. The second study existed as a recovery mechanism.

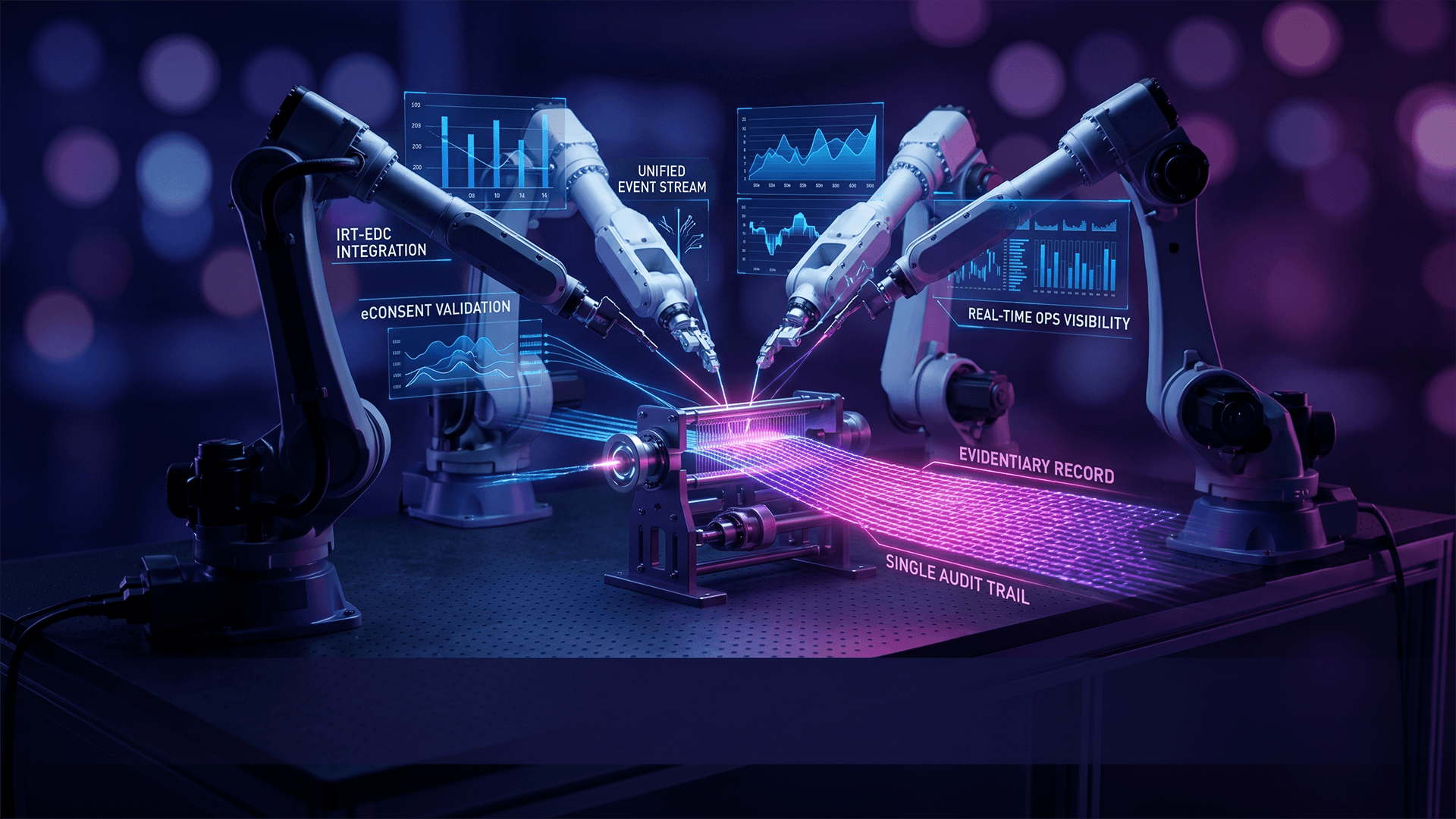

Under a single-study default, that recovery mechanism is gone. The dataset produced by the single adequate and well-controlled study is the evidentiary record. Its integrity, completeness, and fidelity to the protocol are not subject to confirmation or correction by a subsequent program. The FDA's assessment of safety and effectiveness rests entirely on what that one study produced.

This does not change the science of drug development. It changes the consequence of every operational failure within it.

The Protocol Translation Gap

Every clinical trial involves a translation process that runs in three stages. The protocol describes the study scientifically — its objectives, population, endpoints, visit schedules, and operational rules. A data system attempts to implement that protocol operationally — configuring forms, edit checks, workflows, and validation rules. Site staff interpret and execute the study within that system — enrolling patients, capturing data, and making real-time decisions about eligibility and procedure compliance.

At each stage, ambiguity accumulates.

A protocol that specifies "patients with moderate-to-severe disease as defined by a validated scale score of ≥12 at screening" requires that criterion to be enforced at the point of data entry, before randomization, across every site. If the system cannot evaluate that condition programmatically — if it stores the score but does not gate enrollment on it — then enforcement depends on site coordinator judgment. Across a 40-site study, coordinator judgment is not a consistent control.
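Storing a value and gating on it differ by only a few lines of code. The minimal Python sketch below assumes a hypothetical `ScreeningRecord` type and hypothetical `store_only` and `randomize` functions, none of which come from a real EDC product. The threshold of 12 is taken from the example criterion above.

```python
from dataclasses import dataclass

# Threshold from the example criterion: validated scale score >= 12 at screening.
SCREENING_SCORE_THRESHOLD = 12


class EligibilityError(Exception):
    """Raised when randomization is attempted for a participant who fails the criterion."""


@dataclass(frozen=True)
class ScreeningRecord:
    participant_id: str
    site_id: str
    severity_score: int  # validated scale score captured at screening


def store_only(record: ScreeningRecord, database: list) -> None:
    # Data-collection pattern: the score is saved, but nothing evaluates it.
    database.append(record)


def randomize(record: ScreeningRecord, randomized: list) -> None:
    # Protocol-execution pattern: the criterion is evaluated before randomization,
    # and a failing participant cannot proceed regardless of which site entered the data.
    if record.severity_score < SCREENING_SCORE_THRESHOLD:
        raise EligibilityError(
            f"{record.participant_id}: screening score {record.severity_score} "
            f"is below {SCREENING_SCORE_THRESHOLD}; randomization blocked"
        )
    randomized.append(record.participant_id)
```

With the store-only pattern, a score of 9 is saved and nothing else happens, so catching it depends on someone noticing. With the gating pattern, the same score stops randomization at the point of entry.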

A visit schedule with branching conditional logic (for example, "if the participant experiences a Grade 2 or higher adverse event, perform an unscheduled safety assessment within 72 hours") requires the system to recognize that trigger and initiate the appropriate workflow. If the trigger is recorded in one part of the system but the workflow is managed manually in another, the connection is only as reliable as the human who makes it.
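The sketch below shows one way to connect the trigger to the workflow directly. It assumes a hypothetical event handler, `on_adverse_event`, that the data system calls whenever an adverse event is recorded. The grade threshold and the 72-hour window come from the example rule; the type and function names are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Values from the example rule: Grade 2 or higher triggers an assessment within 72 hours.
TRIGGER_GRADE = 2
SAFETY_WINDOW = timedelta(hours=72)


@dataclass(frozen=True)
class AdverseEvent:
    participant_id: str
    grade: int  # severity grade as recorded, e.g. on the CTCAE scale
    recorded_at: datetime


@dataclass(frozen=True)
class ScheduledTask:
    participant_id: str
    task: str
    due_by: datetime


def on_adverse_event(event: AdverseEvent, task_queue: list) -> None:
    """Called whenever an AE is recorded; a qualifying grade schedules the follow-up itself."""
    if event.grade >= TRIGGER_GRADE:
        task_queue.append(
            ScheduledTask(
                participant_id=event.participant_id,
                task="unscheduled_safety_assessment",
                due_by=event.recorded_at + SAFETY_WINDOW,
            )
        )


def overdue(tasks: list, now: datetime) -> list:
    # Tasks past their due time (a fuller version would exclude completed ones).
    return [t for t in tasks if now > t.due_by]
```

Because the trigger and the workflow share one code path, there is no manual hand-off between the adverse event form and the visit schedule for a coordinator to miss.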

These are not edge cases. They describe the operational reality of most clinical trials built on electronic data capture systems that were designed for data collection rather than protocol execution.

In a two-study world, the aggregate effect of these translation failures is protocol deviations — quantifiable, reportable, and partially recoverable. In a single-study world, protocol deviations are not a compliance metric. They are a scientific validity question. Ineligible patients in the primary analysis population, missed assessments in the endpoint data, and inconsistently applied visit windows all introduce noise into the dataset that cannot be retrospectively corrected.

The Eligibility Problem

Eligibility enforcement deserves specific attention because it is where execution risk in a single-study program is most concentrated.

The statistical design of a clinical trial is built around the enrolled population. The power calculation, the assumed effect size, and the primary analysis specification all presume that the patients randomized into the study meet the inclusion and exclusion criteria as written. When patients who do not meet those criteria enter the study — not because of fraud or negligence, but because the system could not evaluate complex conditional eligibility logic — the analysis population diverges from the design population.

The magnitude of that divergence matters. A 3–5% ineligible patient rate, distributed randomly across arms, can introduce enough noise into a modestly powered study to shift a p-value across a significance threshold. In a Phase 2 or Phase 3 program with n=200–400 participants, that range of ineligibility is not hypothetical: it has been documented in inspections and post-marketing reviews as a consequence of system-level enforcement failures rather than site misconduct.
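A back-of-envelope calculation shows one way this can happen. The sketch below makes deliberately simple assumptions. The outcome is normally distributed with unit standard deviation, and the two arms are equal in size. Ineligible participants (fraction `f`) show no treatment effect but otherwise have the same variance, so the observed standardized effect shrinks to `(1 - f) * d`. The inputs (300 participants, true effect 0.23) are hypothetical and chosen only to sit near the threshold. They do not come from any trial.

```python
import math


def two_sided_p(z: float) -> float:
    # Two-sided p-value for a standard normal test statistic.
    return math.erfc(abs(z) / math.sqrt(2))


def z_statistic(effect_size: float, n_per_arm: int) -> float:
    # Two-sample z for a standardized mean difference, equal arms, unit SD.
    return effect_size / math.sqrt(2 / n_per_arm)


def diluted_p(true_effect: float, n_per_arm: int, ineligible_fraction: float) -> float:
    # Assumption: ineligible participants get no benefit, so the observed
    # effect is attenuated in proportion to the ineligible fraction.
    observed = (1 - ineligible_fraction) * true_effect
    return two_sided_p(z_statistic(observed, n_per_arm))


# Hypothetical inputs: 300 participants (150 per arm), true standardized effect 0.23.
for f in (0.00, 0.03, 0.05):
    print(f"ineligible={f:.0%}  p={diluted_p(0.23, 150, f):.3f}")
```

Under these assumptions, the fully eligible study lands just below p = 0.05, and the same study with 5% ineligible participants lands just above it. Real trials are messier, but the direction of the effect is the point.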

In a single-study program, that shift in p-value has no correction mechanism. The study either demonstrates what the protocol intended or it does not.

The engineering response to this risk is deterministic eligibility enforcement — the translation of inclusion and exclusion criteria into executable system logic that evaluates each criterion programmatically at the point of data entry, prevents enrollment of ineligible patients before randomization, and generates an immutable audit record of every eligibility determination. This is not an edit check. It is protocol logic that the system enforces, rather than flags for human review after the fact.
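Extending the single-criterion gate to a full inclusion and exclusion set, a minimal sketch might evaluate every criterion, record the complete result, and only then decide. The criterion IDs, rules, and function names below are hypothetical. "Immutable" here means only that the determination object cannot be modified after creation (a frozen dataclass). Durable, tamper-evident storage is a separate concern.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Callable, Mapping

# Hypothetical criteria. Each rule returns True when the participant satisfies
# the criterion (for exclusions: when the exclusion does not apply).
CRITERIA: dict[str, Callable[[Mapping[str, Any]], bool]] = {
    "INC-01": lambda p: p["screening_score"] >= 12,
    "INC-02": lambda p: 18 <= p["age"] <= 75,
    "EXC-01": lambda p: not p["exclusionary_comorbidity"],
}


@dataclass(frozen=True)
class EligibilityDetermination:
    """One determination per randomization attempt; frozen, so fields cannot be reassigned."""
    participant_id: str
    results: tuple[tuple[str, bool], ...]  # (criterion ID, passed) for every criterion
    eligible: bool
    evaluated_at: datetime


def determine_eligibility(participant_id: str, data: Mapping[str, Any]) -> EligibilityDetermination:
    # Evaluate every criterion, not just until the first failure, so the record is complete.
    results = tuple((cid, bool(rule(data))) for cid, rule in CRITERIA.items())
    return EligibilityDetermination(
        participant_id=participant_id,
        results=results,
        eligible=all(passed for _, passed in results),
        evaluated_at=datetime.now(timezone.utc),
    )


def randomize_if_eligible(det: EligibilityDetermination, audit_log: list, randomized: list) -> bool:
    audit_log.append(det)  # every determination is logged, pass or fail
    if det.eligible:
        randomized.append(det.participant_id)
    return det.eligible
```

Logging failed determinations as well as successful ones matters. The record then shows not only who was enrolled but also who was turned away, and by which criterion.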

The Engineering Response

The broader engineering challenge is translating a protocol — all of it, not just eligibility — into platform behavior that is deterministic, auditable, and consistent across every site and every participant interaction.

Behavior-Driven Development (BDD) is a methodology that makes this translation explicit. Rather than configuring a data collection system and relying on site training to bridge the gap between the protocol and the system, BDD translates protocol rules into plain-language specifications that are simultaneously human-readable and machine-executable. A BDD specification describes what the system must do ("Given a participant with a screening score ≥12 AND no exclusionary comorbidities, when randomization is triggered, then the participant is enrolled and the randomization record is generated"), and automated testing continuously validates that the system's behavior matches those specifications.
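The Given/When/Then structure maps directly onto an automated test. The sketch below is plain Python. Its scenario text follows the Gherkin style used by BDD tools such as Cucumber, behave, and pytest-bdd, but here that text is kept alongside hand-written tests rather than bound to step definitions by one of those tools. `Study` and `Participant` are hypothetical stand-ins for the system under test.

```python
from dataclasses import dataclass, field

# Scenario text as it might appear in a shared, version-controlled specification file.
SCENARIO = """
Feature: Randomization gate

  Scenario: Eligible participant is randomized
    Given a participant with a screening score of 14
    And no exclusionary comorbidities
    When randomization is triggered
    Then the participant is enrolled
    And a randomization record is generated

  Scenario: Participant below the score threshold is not randomized
    Given a participant with a screening score of 11
    And no exclusionary comorbidities
    When randomization is triggered
    Then the participant is not enrolled
    And no randomization record is generated
"""


@dataclass
class Participant:
    screening_score: int
    exclusionary_comorbidity: bool
    enrolled: bool = False


@dataclass
class Study:
    randomization_records: list = field(default_factory=list)

    def trigger_randomization(self, participant: Participant) -> None:
        # Hypothetical system under test: enforces the two criteria in the scenarios.
        if participant.screening_score >= 12 and not participant.exclusionary_comorbidity:
            participant.enrolled = True
            self.randomization_records.append(participant)


def test_eligible_participant_is_randomized() -> None:
    # Given
    study = Study()
    participant = Participant(screening_score=14, exclusionary_comorbidity=False)
    # When
    study.trigger_randomization(participant)
    # Then
    assert participant.enrolled
    assert study.randomization_records == [participant]


def test_low_score_participant_is_not_randomized() -> None:
    # Given
    study = Study()
    participant = Participant(screening_score=11, exclusionary_comorbidity=False)
    # When
    study.trigger_randomization(participant)
    # Then
    assert not participant.enrolled
    assert study.randomization_records == []
```

In a full BDD toolchain, each Given/When/Then line would bind to a step function, so the scenario text itself is what gets executed. Either way, the tests run on every build, and a configuration change that breaks the gate fails before it reaches a site.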

This approach has two operational consequences. First, ambiguity in protocol translation is surfaced during system configuration, before the first patient is enrolled, rather than during monitoring or data review. Second, the specifications themselves constitute living documentation — a shared, version-controlled rule set that sponsors, CROs, and the platform team can interrogate throughout the trial lifecycle.

When protocol logic is implemented this way, the event-driven audit trail becomes a byproduct of execution rather than a reconstruction effort. Every eligibility determination, every workflow trigger, every data entry event that initiates or completes a protocol-defined action is captured in real time as an immutable record linked to the specification it executed against. An FDA inspector reviewing a deviation can trace not just what happened but why — what system state triggered the action and what protocol rule governed it.
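Hash chaining is one common way to make such a trail tamper-evident. Each entry's hash covers the previous entry's hash, so altering any past entry causes verification to fail. The sketch below assumes a hypothetical `AuditTrail` class. It also assumes a hypothetical `spec_id` field that links each event to the specification it executed against. It is illustrative only: hash chaining makes tampering detectable, not impossible, and a production trail would also need trusted timestamps, user attribution, and secure storage.

```python
import hashlib
import json
from dataclasses import dataclass

_BODY_FIELDS = ("sequence", "event_type", "participant_id", "spec_id", "system_state", "prev_hash")
_GENESIS = "0" * 64


@dataclass(frozen=True)
class AuditEvent:
    sequence: int
    event_type: str     # e.g. "eligibility_determined", "workflow_triggered"
    participant_id: str
    spec_id: str        # hypothetical ID of the specification that governed the action
    system_state: dict  # the inputs that caused the action
    prev_hash: str
    entry_hash: str


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditTrail:
    """Append-only log; each entry's hash covers the previous entry's hash."""

    def __init__(self) -> None:
        self._events: list[AuditEvent] = []

    def record(self, event_type: str, participant_id: str, spec_id: str, system_state: dict) -> AuditEvent:
        body = {
            "sequence": len(self._events),
            "event_type": event_type,
            "participant_id": participant_id,
            "spec_id": spec_id,
            "system_state": system_state,
            "prev_hash": self._events[-1].entry_hash if self._events else _GENESIS,
        }
        event = AuditEvent(**body, entry_hash=_digest(body))
        self._events.append(event)
        return event

    def verify(self) -> bool:
        # Recompute every hash; any edited entry or broken link fails the check.
        prev_hash = _GENESIS
        for event in self._events:
            body = {name: getattr(event, name) for name in _BODY_FIELDS}
            if event.prev_hash != prev_hash or _digest(body) != event.entry_hash:
                return False
            prev_hash = event.entry_hash
        return True

    def events_for(self, participant_id: str) -> list[AuditEvent]:
        return [e for e in self._events if e.participant_id == participant_id]
```

Because each event carries both the triggering `system_state` and the `spec_id`, a reviewer can answer "what happened" and "which rule governed it" from the same record.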

This is the architecture that single-study evidentiary standards demand. Not because FDA requires a particular platform design, but because the evidentiary weight of a single adequate and well-controlled study requires that the study was actually adequate and actually well-controlled — at every site, for every participant, from first enrollment to database lock.

Infrastructure Decisions Before First Patient In

The upstream engagement point matters as much as the platform architecture itself. Protocol translation failures are substantially harder to remediate after a study is live than to prevent before configuration begins.

When a clinical data platform engages during protocol development — before regulatory submission, before system configuration, before site activation — the protocol's operational executability can be assessed against the platform's logic layer. Inclusion and exclusion criteria with conditional dependencies can be reviewed for programmatic enforceability. Visit schedule branches can be mapped to event-driven triggers. Vendor data dependencies can be identified and protocol-aware integration logic can be designed before the study goes live.
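Part of that upstream review can itself be made mechanical. The minimal sketch below cross-checks every protocol rule against the set of rules with executable system logic and the set with an executable specification, then lists what is missing before go-live. The rule IDs, rule text, and function name are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProtocolRule:
    rule_id: str  # hypothetical identifier, e.g. "INC-01" or "VIS-03"
    text: str     # the rule as written in the protocol


def executability_gaps(
    rules: list[ProtocolRule], implemented: set[str], specified: set[str]
) -> dict[str, list[str]]:
    """Map each rule ID to what it is missing before the study can go live."""
    gaps: dict[str, list[str]] = {}
    for rule in rules:
        missing = []
        if rule.rule_id not in implemented:
            missing.append("no executable system logic")
        if rule.rule_id not in specified:
            missing.append("no executable specification")
        if missing:
            gaps[rule.rule_id] = missing
    return gaps


protocol = [
    ProtocolRule("INC-01", "Validated scale score >= 12 at screening"),
    ProtocolRule("EXC-04", "Investigator-judged clinically significant abnormality"),
    ProtocolRule("VIS-03", "Grade >= 2 AE: unscheduled safety assessment within 72 hours"),
]
print(executability_gaps(protocol, implemented={"INC-01", "VIS-03"}, specified={"INC-01"}))
```

A rule like the hypothetical `EXC-04`, which depends on investigator judgment, is exactly the kind of gap this review is meant to surface. The fix may be a protocol change (an objective threshold, for example) or a system change that captures the judgment as structured data, decided before the study goes live.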

This upstream review is not a quality assurance exercise. It is a study design activity. The question it answers is whether the scientific intent of the protocol can be implemented deterministically in the execution environment — and if not, where the translation gap is and what protocol or system modification resolves it.

For sponsors running a single pivotal program, the answer to that question before enrollment begins is the difference between a dataset that supports the intended analysis and one that requires extensive post-hoc adjudication. Post-hoc adjudication is not impossible, but in a single-study program it is exactly the kind of analytical decision-making that invites regulatory scrutiny of the evidentiary standard the study was designed to meet.

Conclusion

FDA's shift to a single adequate and well-controlled study as its default evidentiary standard is a regulatory policy change. Its operational consequence is an engineering imperative. When there is no second study, the fidelity of protocol execution is not a compliance objective — it is the scientific foundation on which the entire development program rests.

The protocol translation gap — the distance between a protocol as written and a study as executed — has always introduced operational risk into clinical development. Under a single-study default, that risk has nowhere to go. It accumulates directly in the dataset, and the dataset is the submission.

The infrastructure that closes this gap is not fundamentally different from infrastructure that has always represented best practice in clinical trial execution. But in a single-study world, the consequence of not having it is different. The question for every emerging biopharma sponsor choosing their clinical data platform is whether that platform was built to collect data or built to execute a protocol — and whether that distinction matters for the program they are about to run.

It does.

Note: This post reflects the February 2026 FDA policy statement by Commissioner Makary and CBER Director Prasad published in the New England Journal of Medicine. The single-study-plus-confirmatory-evidence standard is the agency's stated default position. FDA's formal guidance on this standard is a separate document that remains in draft. Sponsors should consult regulatory counsel on how this policy shift applies to their specific development programs.